Building Generative AI Fabrics

Dell PowerSwitch (Ethernet) vs. NVIDIA InfiniBand (Quantum) — A deep dive into DCQCN, PFC, SHARP, and the 800G fabric technologies powering trillion-parameter AI training.

1. The AI Fabric Challenge: Why Traditional Networks Break

Data center traffic patterns have pivoted from standard North-South client-server exchanges to the heavy East-West demands of distributed GPU clusters. Training trillion-parameter models requires constant synchronization of gradients and weights across thousands of nodes. Traditional IP networks, designed with oversubscription and "best-effort" delivery, cannot handle the resulting incast congestion.

These workloads require a strictly lossless environment. In a standard network, packet loss triggers retransmissions that cause latency spikes and head-of-line blocking. Because an all-reduce completes only when its slowest participant finishes, that jitter stalls every GPU in the job. If the network cannot maintain deterministic throughput, expensive compute cycles are wasted waiting for data synchronization, and over a long run the lost time can extend training by weeks.

High-performance fabrics must now provide massive East-West bandwidth while eliminating the tail latency that bottlenecks the entire compute pipeline.
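A minimal sketch of why tail latency matters, assuming a simple model in which each synchronous step costs the compute time plus the slowest node's communication time. The function names and the numbers are illustrative assumptions, not measurements.

```python
def sync_step_ms(compute_ms, comm_ms_per_node):
    """Duration of one synchronous training step.

    Every node waits at the all-reduce barrier, so the slowest
    node's communication time sets the pace for the whole job.
    """
    return compute_ms + max(comm_ms_per_node)


def stalled_fraction(compute_ms, comm_ms_per_node):
    """Share of the step that the fastest node spends waiting on the slowest."""
    actual = sync_step_ms(compute_ms, comm_ms_per_node)
    ideal = compute_ms + min(comm_ms_per_node)
    return (actual - ideal) / actual


# Hypothetical numbers: 1,024 nodes, 100 ms of compute, 10 ms of
# communication, and one node delayed to 50 ms by a retransmission.
healthy = [10.0] * 1024
one_straggler = [10.0] * 1023 + [50.0]

print(sync_step_ms(100.0, healthy))
print(sync_step_ms(100.0, one_straggler))
print(round(stalled_fraction(100.0, one_straggler), 3))
```

In this toy model a single delayed node out of 1,024 stretches the step from 110 ms to 150 ms, so the other 1,023 nodes sit idle for roughly a quarter of every step.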

2. The Ethernet/Dell Approach: Enterprise SONiC and RoCEv2

Dell Technologies utilizes an open networking strategy to build high-scale IP fabrics. This approach centers on disaggregated hardware and the Enterprise SONiC operating system, allowing architects to maintain familiar Linux-based management workflows while scaling to the 800Gbps tier.

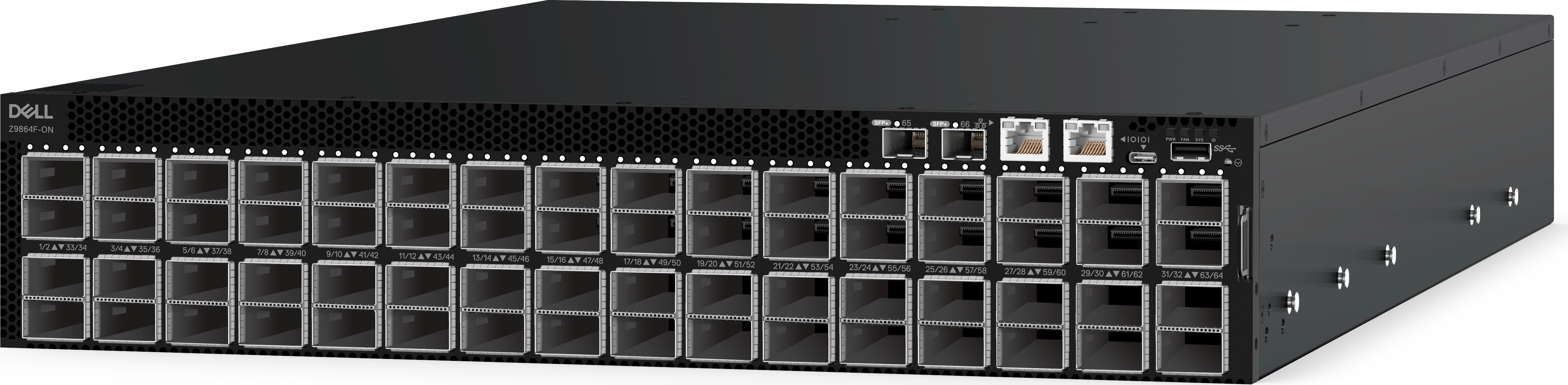

The Dell PowerSwitch Z9864F-ON is the primary heavy-lifter for these fabrics. It is built on a monolithic 5nm die package for maximum power efficiency and delivers 51.2Tbps of switching capacity (102.4Tbps when counted full duplex). The switch provides 64 ports of 800GbE in an OSFP112 form factor using PAM4 signaling. This contrasts sharply with the S4100 and S5200 series: a model such as the S4112T-ON tops out at 10GbE (via 10GBase-T) on its access ports and 100GbE (QSFP28) on its uplinks, so it serves primarily as management connectivity or entry-level aggregation.
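The headline capacity can be checked from the port count. The sketch below shows the arithmetic and why the same switch can be quoted at two different figures, depending on whether traffic is counted in one direction or both. The helper name `aggregate_tbps` is an illustrative assumption.

```python
import math


def aggregate_tbps(ports, gbps_per_port, full_duplex=False):
    """Aggregate port bandwidth in Tbps.

    Vendors quote either the one-direction total or the
    full-duplex total, which counts both directions.
    """
    one_way = ports * gbps_per_port / 1000
    return one_way * 2 if full_duplex else one_way


# Z9864F-ON: 64 ports of 800GbE
print(aggregate_tbps(64, 800))                    # one direction
print(aggregate_tbps(64, 800, full_duplex=True))  # both directions

assert math.isclose(aggregate_tbps(64, 800), 51.2)
assert math.isclose(aggregate_tbps(64, 800, full_duplex=True), 102.4)
```

When comparing switches across vendors, it helps to confirm which convention each data sheet uses before comparing the numbers.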

To ensure the "lossless" performance required for AI, Dell implements a specialized Ethernet stack:

Priority-based Flow Control (PFC)

Prevents buffer-overflow packet loss by pausing individual traffic classes during congestion, giving RoCEv2 traffic a lossless Layer 2 path while other classes keep flowing.

Data Center Bridging Exchange (DCBX)

Automates the configuration of lossless parameters across the fabric, ensuring consistent behavior from leaf to spine.

RoCEv2 (RDMA over Converged Ethernet)

Enables Remote Direct Memory Access over routable UDP/IP, bypassing the host CPU and kernel network stack to allow direct GPU-to-GPU memory transfers at line rate.

Enhanced Transmission Selection (ETS)

Manages bandwidth allocation to prevent standard IP traffic from starving AI flows, ensuring deterministic throughput for training jobs.
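The pause behavior that PFC provides can be sketched with a toy queue model. This is a simplified illustration, not switch firmware: the thresholds `xoff` and `xon`, the tick-based timing, and the assumption that a pause takes effect on the very next tick are all hypothetical. Real deployments must also size buffer headroom for frames already in flight when the pause is sent.

```python
def simulate_queue(ticks, arrival, drain, capacity, xoff=None, xon=None):
    """Simulate one ingress queue; returns (dropped_packets, final_depth).

    With xoff/xon set, the receiver pauses the sender once the queue
    reaches xoff and resumes it once the queue falls to xon (PFC-like).
    Without them, packets that do not fit are dropped (best effort).
    """
    queue = 0
    dropped = 0
    paused = False
    for _ in range(ticks):
        if not paused:
            accepted = min(arrival, capacity - queue)
            dropped += arrival - accepted
            queue += accepted
        queue -= min(queue, drain)
        if xoff is not None:
            if queue >= xoff:
                paused = True      # send PAUSE for this priority
            elif queue <= xon:
                paused = False     # send RESUME
    return dropped, queue


# Hypothetical load: 10 packets/tick arriving, 6 packets/tick draining.
best_effort_drops, _ = simulate_queue(100, arrival=10, drain=6, capacity=100)
pfc_drops, _ = simulate_queue(100, arrival=10, drain=6, capacity=100,
                              xoff=60, xon=20)
print(best_effort_drops, pfc_drops)
```

In this model the best-effort queue overflows and drops packets, while the paused queue never does. The cost is that the sender is throttled, which is why PFC is typically paired with a congestion control scheme such as DCQCN.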

Dell manages this via Enterprise SONiC, which provides a programmable NPU pipeline through NPL (Network Programming Language). This enables advanced features like adaptive routing and cognitive routing, which mitigate incast congestion by dynamically steering flows around hotspots. Furthermore, integration with Dell SmartFabric Manager and support for NVMe/TCP allow architects to unify AI compute and high-performance IP storage into a single managed fabric.
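To illustrate the idea behind steering flows around hotspots, the sketch below contrasts static hash-based path selection with a load-aware choice. It is a conceptual model only and does not represent the routing logic in Enterprise SONiC or any switch ASIC; the function names and flow sizes are assumptions.

```python
import zlib


def static_hash_pick(flow_id, n_uplinks):
    """Static ECMP-style choice: the path depends only on a hash of the flow."""
    return zlib.crc32(flow_id.encode()) % n_uplinks


def adaptive_pick(load):
    """Load-aware choice: send the flow to the least-loaded uplink."""
    return min(range(len(load)), key=load.__getitem__)


def place_flows(flows, n_uplinks, adaptive):
    """Assign (flow_id, gbps) pairs to uplinks; returns per-uplink load."""
    load = [0] * n_uplinks
    for flow_id, gbps in flows:
        if adaptive:
            idx = adaptive_pick(load)
        else:
            idx = static_hash_pick(flow_id, n_uplinks)
        load[idx] += gbps
    return load


# Hypothetical workload: eight equal 100 Gbps flows over four uplinks.
flows = [(f"gpu{i}->gpu{i + 8}", 100) for i in range(8)]
static_load = place_flows(flows, 4, adaptive=False)
adaptive_load = place_flows(flows, 4, adaptive=True)
print("static:  ", static_load)
print("adaptive:", adaptive_load)
```

AI training traffic tends to consist of a small number of very large flows, so hash collisions under static selection can leave one uplink overloaded while another sits idle. The load-aware version spreads the same flows evenly.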

3. The InfiniBand/NVIDIA Approach: Quantum-2 and Quantum-X800

InfiniBand remains the "native" language of high-end GPU clusters, engineered specifically for the low-latency, high-throughput requirements of In-Network Computing. The NVIDIA platform minimizes the overhead found in standard IP headers and offloads collective communication tasks directly to the switch silicon.

The portfolio currently centers on the Quantum-2 (NDR 400G) and the Quantum-X800 (800G). The Q3400-RA (air-cooled) switch provides an aggregate throughput of 115.2Tb/s through a high-radix design featuring 144 ports of 800Gb/s via 72 OSFP cages. Unlike Ethernet, InfiniBand uses credit-based flow control at the hardware level, ensuring a naturally lossless environment without the configuration complexity of PFC.
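Credit-based flow control can be sketched as follows. The sender may transmit only while it holds credits, and each credit corresponds to one free buffer slot at the receiver. This is a simplified per-link model with hypothetical parameter names, not the InfiniBand wire protocol.

```python
def simulate_credits(ticks, offered, drain, buffer_slots):
    """Simulate a credit-controlled link; returns (packets_sent, max_queue_depth).

    Invariant: credits + queue == buffer_slots, so the receiver's
    buffer can never overflow and nothing is ever dropped.
    """
    credits = buffer_slots   # receiver advertises all of its free slots
    queue = 0
    max_queue = 0
    sent_total = 0
    for _ in range(ticks):
        sent = min(offered, credits)   # sender is limited by credits held
        credits -= sent
        queue += sent
        sent_total += sent
        max_queue = max(max_queue, queue)
        freed = min(queue, drain)      # receiver forwards packets onward
        queue -= freed
        credits += freed               # freed slots return as new credits
    return sent_total, max_queue


# Hypothetical load: sender offers 10 packets/tick, receiver drains 6/tick.
sent, deepest = simulate_credits(100, offered=10, drain=6, buffer_slots=32)
print(sent, deepest)
```

Because the sender never transmits without a credit, losslessness is a property of the link itself rather than something achieved by reacting to congestion after the fact, which is the contrast with PFC that the paragraph above draws.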

SHARP: In-Network Computing

A critical performance driver is the Scalable Hierarchical Aggregation and Reduction Protocol (SHARP):

- SHARPv3 (Quantum-2): Supports 64 parallel flows, providing 32X higher AI acceleration power than previous generations. Available on the QM9790, QM9701, and QM9700.

- 4th Gen SHARP (Quantum-X800): Introduces FP8 precision and offloads collective operations like ReduceScatter and ScatterGather to the switch fabric. This reduces the data traversing the network and can boost application performance by up to 9X. Available on the Q3401-RD, Q3400-RA, and Q3200-RA.
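A rough way to see why in-network reduction cuts traffic is to compare the bytes each node must send. The sketch assumes an idealized model: a standard ring all-reduce on the host side versus a switch that sums the inputs and returns one result. These formulas are textbook approximations, not NVIDIA's published performance figures, and the function names are illustrative.

```python
import math


def ring_allreduce_bytes_sent(n_nodes, message_bytes):
    """Bytes each node sends in a ring all-reduce.

    The ring runs a reduce-scatter and then an all-gather, each
    moving (n - 1) / n of the message per node.
    """
    return 2 * (n_nodes - 1) / n_nodes * message_bytes


def in_network_bytes_sent(message_bytes):
    """Bytes each node sends when the switch performs the reduction.

    Idealized: every node sends its buffer up once and receives
    the reduced result back once.
    """
    return message_bytes


size = 1_000_000_000  # hypothetical 1 GB gradient buffer
for n in (8, 1024):
    ring = ring_allreduce_bytes_sent(n, size)
    offload = in_network_bytes_sent(size)
    print(n, ring / offload)
```

Under these assumptions the per-node send volume approaches half of the ring figure as the node count grows, and the number of sequential communication steps no longer scales with the size of the ring.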

For reliability, InfiniBand employs self-healing technology and telemetry-based congestion control to provide nearly perfect effective bandwidth. These mechanisms offer performance isolation, ensuring that multiple jobs or tenants within a cluster do not suffer from "noisy neighbor" interference—a vital requirement for deterministic training at scale.

4. Physical Architecture: Rail-Optimized Topologies and CPO

In massive GPU clusters, the physical topology dictates the maximum job size and power footprint. High-radix switches allow for "flatter" networks, reducing the hop count and the number of active components required to connect thousands of nodes.

NVIDIA leverages Rail-Optimized and Fat Tree topologies to maintain job locality. The high radix of the Quantum-X800 supports a two-tier Fat Tree capable of connecting 10,368 NICs at 800Gb/s speeds. This density minimizes the number of switch tiers, slashing both latency and cable complexity.
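The 10,368 figure follows from the switch radix, assuming a non-blocking two-tier fat tree in which every leaf splits its ports evenly between NICs and spines. A minimal sketch of that arithmetic, with a hypothetical helper name:

```python
def two_tier_fat_tree_endpoints(radix):
    """Maximum endpoints in a non-blocking two-tier fat tree.

    Each leaf uses half its ports for endpoints and half for spine
    uplinks. Each spine port connects to one leaf, so the fabric
    holds at most `radix` leaves.
    """
    if radix % 2:
        raise ValueError("radix must be even to split ports evenly")
    endpoints_per_leaf = radix // 2
    max_leaves = radix
    return endpoints_per_leaf * max_leaves


print(two_tier_fat_tree_endpoints(144))  # 144-port Quantum-X800
print(two_tier_fat_tree_endpoints(64))   # a 64-port switch, for comparison
```

Endpoint count grows with the square of the radix, so moving from 64 to 144 ports raises the two-tier limit from 2,048 to 10,368 endpoints without adding a third switching tier.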

Co-Packaged Optics (CPO)

Physical efficiency is further improved via Co-Packaged Optics (CPO) in the Quantum-X800 Q3450-LD:

63X Signal Integrity

CPO integrates silicon photonics with the ASIC, reducing the electrical path to millimeters.

4 dB Insertion Loss

A massive improvement over the 22 dB typical of pluggable transceivers.

85% Liquid Cooled

Significantly lowering TCO in high-density AI Factories by reducing thermal management energy.
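One plausible reading of the figures above is that the 63X value is the linear power ratio implied by the drop from 22 dB to 4 dB of loss. That link is an assumption made here for illustration and is not stated in the source. The conversion itself is standard decibel arithmetic:

```python
def db_to_power_ratio(db):
    """Convert a decibel difference to a linear power ratio."""
    return 10 ** (db / 10)


pluggable_loss_db = 22  # figure quoted above for pluggable transceivers
cpo_loss_db = 4         # figure quoted above for co-packaged optics

improvement_db = pluggable_loss_db - cpo_loss_db
print(improvement_db)
print(round(db_to_power_ratio(improvement_db), 1))
```

An 18 dB reduction corresponds to a power ratio of about 63, which lines up with the headline number.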

5. The Verdict: Strategic Recommendation

The choice between Dell Ethernet and NVIDIA InfiniBand depends on the specific scale of the AI workload and the existing data center ecosystem. Both platforms have converged at the 800G speed tier, but their operational philosophies remain distinct.

Choose Ethernet (Dell PowerSwitch)

- Existing Talent: Leveraging SONiC and Linux-based management skills to avoid the learning curve of specialized InfiniBand fabrics.

- Multi-Vendor Flexibility: Utilizing a disaggregated model with hardware like the Z9864F-ON to avoid proprietary vendor lock-in.

- Unified Storage: Integrating compute nodes with NVMe/TCP storage and managing the lifecycle through Dell SmartFabric Manager.

Choose InfiniBand (NVIDIA Quantum)

- Maximum Training Efficiency: Achieving the absolute lowest tail latency for trillion-parameter training jobs.

- In-Network Computing: Utilizing SHARP offloads and FP8 precision support to maximize GPU utilization.

- Deterministic Scale: Building massive clusters of 10,368 NICs or more with guaranteed performance isolation and hardware-based self-healing.

Products Referenced in This Guide

PowerSwitch Z9864F-ON

64x 800GbE • 51.2 Tbps • Enterprise SONiC

PowerSwitch Z9664F-ON

64x 400GbE • 25.6 Tbps • Enterprise SONiC

PowerSwitch Z9432F-ON

32x 400GbE • 12.8 Tbps • Enterprise SONiC

Quantum-X800 Q3401-RD

144x 800Gb/s • 115.2 Tbps • 4th Gen SHARP

Quantum-X800 Q3400-RA

144x 800Gb/s • 115.2 Tbps • Air-Cooled

Quantum-X800 Q3200-RA

72x 800Gb/s • 57.6 Tbps • Dual-Switch 2U

Quantum-2 QM9790

64x 400Gb/s • 25.6 Tbps • SHARPv3

Quantum-2 QM9700

64x 400Gb/s • 25.6 Tbps • NDR

PowerSwitch S4112T-ON

12x 10GBase-T • 840 Gbps (full duplex) • Management / Edge
